-

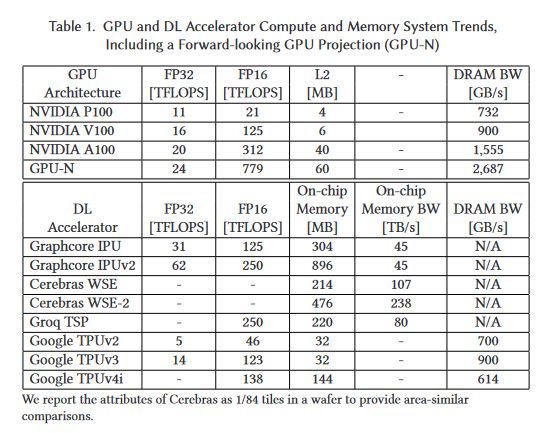

Nvidia Data Center GPU MCM Approach: Composable On-Package Architecture (COPA). HPC and DL GPUs diverge by modifying I/O and cache with chiplets, depending on the workload. (1/x)

-

DL Bottlenecks (2/x)

-

Ballooning LLC Requirements for DL Workloads (3/x)

-

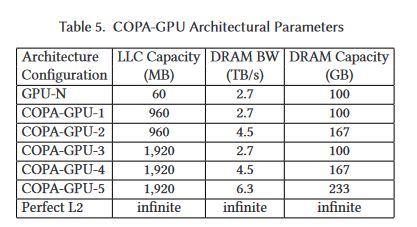

Bandwidth and Efficiency of 2.5D and 3D Packaging: 28.7 mm² of silicon area is used for 14.7 TB/s with 3.5D packaging. A conservative estimate is 960 MB of L3 in 826 mm². (4/x)
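As a rough sanity check on the figures above, here is a minimal sketch that turns them into per-area densities. The variable names are my own, and it assumes the 826 mm² figure is the area holding the 960 MB of L3, as the tweet states.

```python
# Figures quoted in the tweet; names are illustrative, not from the paper.
link_bandwidth_tb_s = 14.7  # TB/s delivered by the die-to-die link
link_area_mm2 = 28.7        # mm² of silicon spent on that link
l3_capacity_mb = 960        # MB of L3 (conservative estimate)
l3_area_mm2 = 826           # mm² assumed to hold that L3

# Bandwidth delivered per mm² of link silicon.
bandwidth_density = link_bandwidth_tb_s / link_area_mm2

# Cache capacity per mm² of die area.
cache_density = l3_capacity_mb / l3_area_mm2

print(f"{bandwidth_density:.3f} TB/s per mm²")
print(f"{cache_density:.2f} MB per mm²")
```

That works out to roughly half a TB/s per mm² of link area and a bit over 1 MB of L3 per mm².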

-

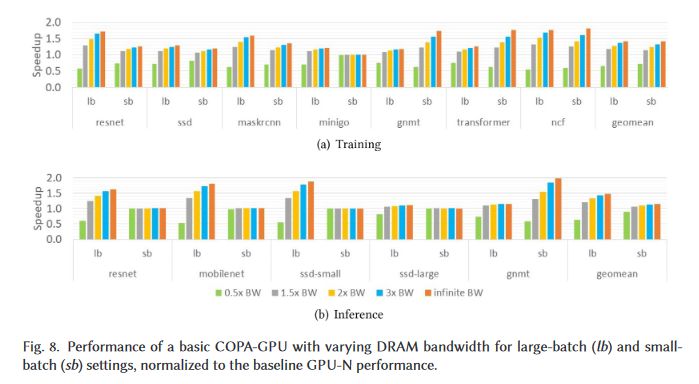

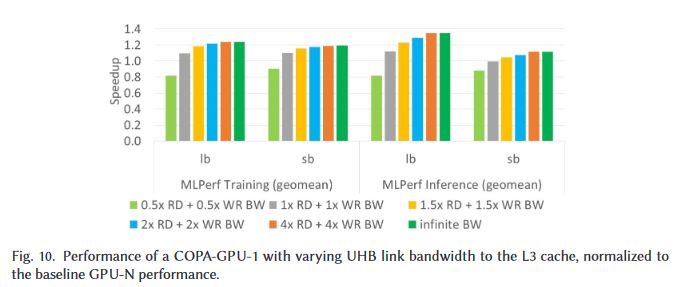

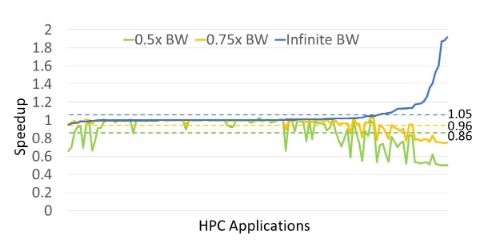

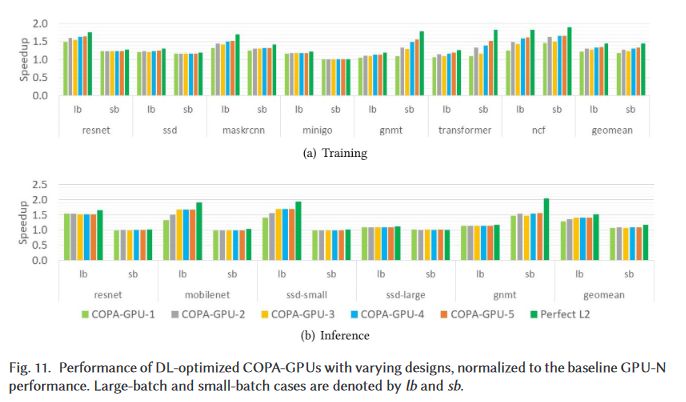

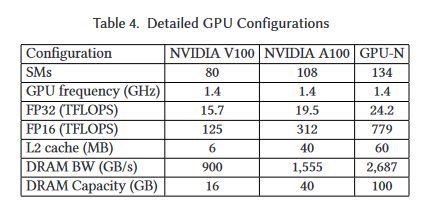

Now it's time for my favourite: speculation. I believe GPU-N is a single-die GH100 with a total of 144 SMs, of which 134 are enabled. It seems to have 6144b HBM2e @ 3.5 Gb/s. GPU-N is the HPC version of the GPU, while the various COPA-GPUs (CG) are DL-optimised. (9/x)
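For reference, a small sketch of what those speculated numbers imply. It assumes the 3.5 Gb/s figure is a per-pin data rate and uses the usual peak formula (bus width × pin rate ÷ 8); the function name is hypothetical.

```python
def hbm_bandwidth_gb_s(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gb/s) / 8."""
    return bus_width_bits * pin_rate_gbps / 8


# Speculated GPU-N configuration from the tweet.
gpu_n_bandwidth = hbm_bandwidth_gb_s(6144, 3.5)

# Fraction of SMs enabled (134 of 144).
sm_enabled_fraction = 134 / 144

print(f"GPU-N peak bandwidth: {gpu_n_bandwidth:.0f} GB/s")
print(f"SMs enabled: {sm_enabled_fraction:.1%}")
```

Under these assumptions GPU-N lands at about 2.7 TB/s of peak memory bandwidth with roughly 93% of its SMs enabled.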

-

CG-1 and CG-3 have 6144b HBM2e. CG-2 and CG-4 have 12288b HBM2e. CG-5 has 14336b HBM2e. I don't expect all of these CGs to exist, since it would require both 2.5D and 3D packaging capable GH100 dies. (10/x)
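To put the bus widths in perspective, here is a hedged sketch that converts them into HBM stack counts and peak bandwidth. It assumes the standard 1024-bit interface per HBM2e stack and reuses the 3.5 Gb/s pin rate, which the thread only states for GPU-N, so the bandwidth column is an assumption.

```python
STACK_WIDTH_BITS = 1024  # standard HBM2e interface width per stack
PIN_RATE_GBPS = 3.5      # assumed: same pin rate as quoted for GPU-N

# Bus widths per configuration, as listed in the tweet.
bus_widths = {
    "CG-1": 6144,
    "CG-2": 12288,
    "CG-3": 6144,
    "CG-4": 12288,
    "CG-5": 14336,
}

summary = {}
for name, width in bus_widths.items():
    stacks = width // STACK_WIDTH_BITS          # number of HBM2e stacks
    bandwidth_gb_s = width * PIN_RATE_GBPS / 8  # peak GB/s at the assumed rate
    summary[name] = (stacks, bandwidth_gb_s)
    print(f"{name}: {stacks} stacks, {bandwidth_gb_s:.0f} GB/s")
```

So the 12288b configurations would double the stack count of the 6144b ones (12 vs 6), and CG-5 would need 14 stacks.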

-

As I feared, it seems Nvidia has decided to focus on DL performance: FP32 scaling comes only from the increased SM count, and presumably the same is true of FP64 (HPC). This isn't really conducive to the 3x performance rumour. (11/x)

-

Source: "GPU Domain Specialization via Composable On-Package Architecture", by Nvidia, USA. dl.acm.org/doi/10.1145/3484505 (12/x)

Redfire75369’s Twitter Archive—№ 2,239
